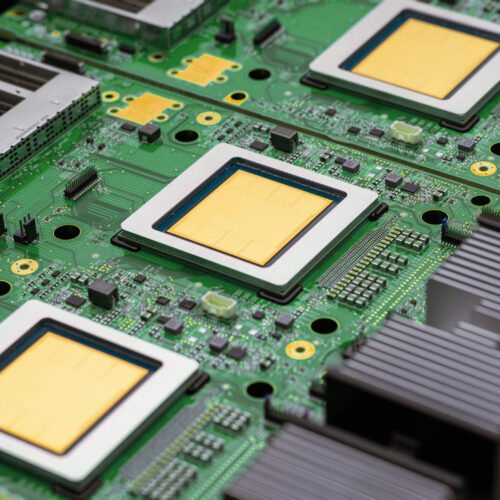

Google unveils two new TPUs designed for the "agentic era"

Summary

Google has introduced its eighth-generation custom chips called TPUs (Tensor Processing Units) designed for faster and more efficient AI work. There are two new types: TPU 8t for training AI models much quicker, and TPU 8i for running AI models more efficiently during use.

Key Facts

- Google uses its own TPUs instead of Nvidia AI chips for its cloud AI services.

- TPU 8t is built to speed up AI training, reducing the time from months to weeks.

- TPU 8t clusters, called pods, contain 9,600 chips and 2 petabytes of shared memory.

- TPU 8t can scale up to one million chips working together in a single system.

- TPU 8i is designed for running AI models (inference) more efficiently, with larger pods of 1,152 chips.

- TPU 8i chips have three times as much on-chip SRAM (384 MB), for faster processing of longer tasks.

- Both new TPUs use Google's custom ARM-based CPUs instead of older x86 CPUs, improving energy efficiency.

- Google aims to make AI training and use more efficient to reduce costs and power consumption.
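
To put the TPU 8t figures above in proportion, here is a rough back-of-the-envelope sketch. It uses only the numbers from the list (9,600 chips per pod, 2 petabytes of shared memory, up to one million chips). Two things are assumptions and not from the source: that a petabyte is counted in decimal units, and that the shared memory is spread evenly across chips. The function names are hypothetical and used only for illustration.

```python
import math

# Figures taken from the Key Facts list.
CHIPS_PER_8T_POD = 9_600
POD_SHARED_MEMORY_PB = 2
MAX_CHIPS_PER_SYSTEM = 1_000_000

# Assumption: decimal units, so 1 PB = 1,000,000 GB.
GB_PER_PB = 1_000_000


def memory_per_chip_gb(pod_memory_pb, chips):
    """Average share of pod memory per chip, assuming an even split."""
    return pod_memory_pb * GB_PER_PB / chips


def pods_needed(total_chips, chips_per_pod):
    """Whole pods required to reach a given chip count."""
    return math.ceil(total_chips / chips_per_pod)


if __name__ == "__main__":
    per_chip = memory_per_chip_gb(POD_SHARED_MEMORY_PB, CHIPS_PER_8T_POD)
    pods = pods_needed(MAX_CHIPS_PER_SYSTEM, CHIPS_PER_8T_POD)
    print(f"Memory per chip: about {per_chip:.1f} GB")
    print(f"Pods for {MAX_CHIPS_PER_SYSTEM:,} chips: {pods}")
```

Under those assumptions, each chip's share works out to roughly 208 GB, and a one-million-chip system would span about 105 pods. The summary does not say how the memory is actually distributed or how the largest systems are assembled, so treat these as orders of magnitude only.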

This is a fact-based summary from The Actual News.